Projects

End-to-end implementations spanning calibration, localization, 3D reconstruction, and deep learning perception.

Calibration

Multi-Sensor

C++

Oct 2025 – Dec 2025

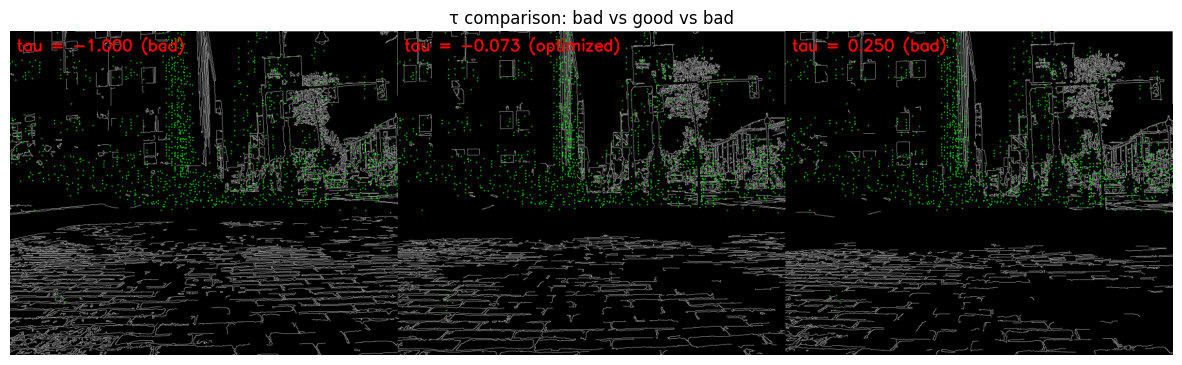

Camera–LiDAR Temporal Calibration

Targetless temporal calibration pipeline that estimates the real-world camera–LiDAR time offset in unsynchronized ROS data by optimizing cross-modal edge alignment. Edges are detected with Canny and scored with distance transforms; the estimated 70 ms offset was validated by cross-checking Powell optimization against a dense grid search. IMU preintegration merges 3 consecutive scans into a motion-compensated, denser point cloud.

70 ms offset validated via Powell + grid search

3 scans merged via IMU preintegration
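The edge-alignment scoring can be illustrated in one dimension. This is a simplified sketch, not the pipeline itself: it assumes edges were already extracted (e.g. by Canny) into a boolean mask for a single image row, and that image-plane motion is constant, so a time offset shifts projected LiDAR edges by `pixel_velocity * offset`. The names `distance_transform_1d`, `alignment_cost`, and `grid_search_offset` are hypothetical, and only the grid-search half of the Powell/grid cross-check is shown.

```python
def distance_transform_1d(edge_mask):
    """Two-pass 1D distance transform: distance (pixels) to the nearest edge pixel."""
    n = len(edge_mask)
    dist = [0.0 if e else float("inf") for e in edge_mask]
    for i in range(1, n):  # forward pass
        dist[i] = min(dist[i], dist[i - 1] + 1)
    for i in range(n - 2, -1, -1):  # backward pass
        dist[i] = min(dist[i], dist[i + 1] + 1)
    return dist


def alignment_cost(dt_map, lidar_edges_px, pixel_velocity, offset_s):
    """Mean distance-transform value at LiDAR edge points shifted by a time offset.

    Assumption: constant image-plane motion, so an offset of offset_s seconds
    moves every projected LiDAR edge by pixel_velocity * offset_s pixels.
    """
    total = 0.0
    for x in lidar_edges_px:
        u = round(x + pixel_velocity * offset_s)
        u = min(max(u, 0), len(dt_map) - 1)  # clamp to the image row
        total += dt_map[u]
    return total / len(lidar_edges_px)


def grid_search_offset(dt_map, lidar_edges_px, pixel_velocity, lo, hi, step):
    """Dense grid search: return the candidate offset with the lowest alignment cost."""
    n_steps = int(round((hi - lo) / step))
    candidates = [lo + k * step for k in range(n_steps + 1)]
    return min(candidates,
               key=lambda t: alignment_cost(dt_map, lidar_edges_px, pixel_velocity, t))


if __name__ == "__main__":
    row = [False] * 100
    for x in (20, 50, 80):  # camera edges
        row[x] = True
    dt_map = distance_transform_1d(row)
    # LiDAR edges lag the camera edges by 7 px (0.07 s at 100 px/s)
    best = grid_search_offset(dt_map, [13, 43, 73], 100.0, -0.1, 0.1, 0.01)
    print(round(best, 3))  # prints 0.07
```

A continuous optimizer such as Powell's method could refine the grid minimum; agreement between the two is what makes the estimate trustworthy.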

State Estimation

EKF

Sep 2025 – Oct 2025

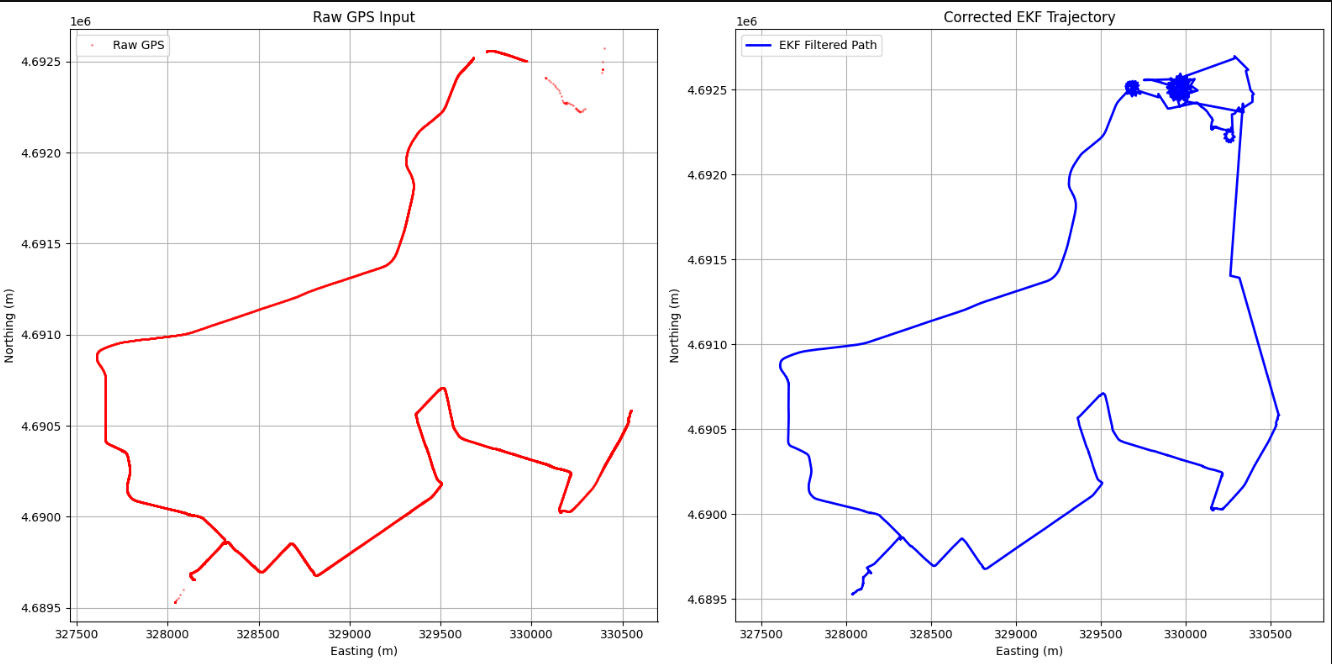

GPS–IMU Fusion via Extended Kalman Filter

Real-time state estimation pipeline fusing 200 Hz IMU data with GNSS fixes. Implements the EKF prediction and measurement-update steps; falls back to IMU-only dead reckoning in GPS-denied zones and adaptively weights the measurement covariance when the signal degrades.

200 Hz IMU · GPS fusion

Continuous localization in tunnels
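A minimal sketch of the predict/update cycle, assuming a 1D model with state [position, velocity], IMU acceleration as the control input, and GPS position fixes. With this linear model the EKF Jacobians are constant, so the filter reduces to a standard Kalman filter; a real GPS–IMU EKF would use a nonlinear 3D model. The `gps_variance` rule, which inflates measurement noise from an HDOP-like quality value, is a hypothetical stand-in for the adaptive covariance weighting.

```python
def predict(x, P, accel, dt, q):
    """IMU step: propagate [position, velocity] with measured acceleration.

    F = [[1, dt], [0, 1]]; P' = F P F^T + Q, with Q = q * I as a simplification.
    """
    p, v = x
    x_new = [p + v * dt + 0.5 * accel * dt * dt, v + accel * dt]
    a, b = P[0]
    c, d = P[1]
    P_new = [
        [a + dt * (b + c) + dt * dt * d + q, b + dt * d],
        [c + dt * d, d + q],
    ]
    return x_new, P_new


def update(x, P, z, r):
    """GPS step: position measurement with H = [1, 0] and variance r."""
    s = P[0][0] + r                    # innovation covariance S = H P H^T + R
    k0, k1 = P[0][0] / s, P[1][0] / s  # Kalman gain K = P H^T S^-1
    y = z - x[0]                       # innovation
    x_new = [x[0] + k0 * y, x[1] + k1 * y]
    P_new = [                          # P' = (I - K H) P
        [(1 - k0) * P[0][0], (1 - k0) * P[0][1]],
        [P[1][0] - k1 * P[0][0], P[1][1] - k1 * P[0][1]],
    ]
    return x_new, P_new


def gps_variance(hdop, base_r=4.0):
    """Hypothetical adaptive weighting: trust the fix less as its quality worsens."""
    return base_r * hdop ** 2


def run(accels, fixes, dt, q=1e-4):
    """accels: one IMU sample per step; fixes: (z, hdop) or None when GPS is unavailable."""
    x, P = [0.0, 0.0], [[1.0, 0.0], [0.0, 1.0]]
    for accel, fix in zip(accels, fixes):
        x, P = predict(x, P, accel, dt, q)
        if fix is not None:
            z, hdop = fix
            x, P = update(x, P, z, gps_variance(hdop))
        # fix is None: GPS-denied (e.g. a tunnel), so keep dead reckoning on the IMU alone
    return x, P
```

Passing `None` for every fix after some step mimics entering a tunnel: the state keeps propagating on IMU data while `P[0][0]` grows, which is the expected signature of dead reckoning.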

SLAM

ROS2

Sep 2025 – Oct 2025

Indoor 3D Mapping with RTAB-Map

Visual SLAM pipeline using RTAB-Map in ROS2 with a ZED Mini stereo camera. Deployed in underground tunnels; validated loop-closure quality, visual odometry drift, and pose-graph consistency across long trajectories.

90% ground truth alignment
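Odometry drift is commonly quantified with absolute trajectory error (ATE) and end-point drift relative to path length. A minimal sketch, assuming estimated and ground-truth positions are already time-associated and expressed in the same frame (a full evaluation would first apply an SE(3) or Umeyama alignment); the function names are hypothetical, and this is not necessarily how the alignment figure above was computed.

```python
import math


def path_length(traj):
    """Total length of a polyline of 3D positions."""
    return sum(math.dist(a, b) for a, b in zip(traj, traj[1:]))


def ate_rmse(est, gt):
    """Absolute trajectory error (RMSE) between time-associated positions.

    Assumes both trajectories are already in the same frame (no SE(3)/Umeyama alignment).
    """
    sq = [math.dist(e, g) ** 2 for e, g in zip(est, gt)]
    return math.sqrt(sum(sq) / len(sq))


def end_drift_percent(est, gt):
    """Final position error as a percentage of the ground-truth path length."""
    return 100.0 * math.dist(est[-1], gt[-1]) / path_length(gt)
```

For a 4 m straight path whose final estimate is off by 0.2 m, `end_drift_percent` gives 5%.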

3D Reconstruction

Computer Vision

Oct 2025 – Nov 2025

3D Reconstruction via Structure from Motion

Full SfM pipeline on 24 monocular images using SIFT features and RANSAC-based outlier rejection. Non-linear bundle adjustment over 1,477 landmarks improved accuracy by 26%. Camera localization in GPS-denied settings via essential matrix decomposition and PnP pose estimation.

26% accuracy improvement via bundle adjustment

1,477 landmarks · 24 monocular images
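Bundle adjustment minimizes the total squared reprojection error over camera poses and landmark positions. A minimal sketch of that objective, assuming an undistorted pinhole camera with intrinsics `(fx, fy, cx, cy)` and poses given as a rotation matrix `R` and translation `t` mapping world to camera coordinates. `project` and `ba_cost` are hypothetical names, and the nonlinear least-squares solver that would minimize this cost (e.g. Ceres or `scipy.optimize.least_squares`) is not shown.

```python
def project(K, R, t, X):
    """Project world point X to pixels: x_cam = R X + t, then apply the pinhole model."""
    Xc = [sum(R[i][j] * X[j] for j in range(3)) + t[i] for i in range(3)]
    fx, fy, cx, cy = K
    return (fx * Xc[0] / Xc[2] + cx, fy * Xc[1] / Xc[2] + cy)


def ba_cost(K, cameras, points, observations):
    """Sum of squared reprojection errors.

    cameras: list of (R, t); points: list of 3D landmarks;
    observations: list of (camera_index, point_index, (u, v)) pixel measurements.
    """
    cost = 0.0
    for cam_idx, pt_idx, (u, v) in observations:
        R, t = cameras[cam_idx]
        pu, pv = project(K, R, t, points[pt_idx])
        cost += (pu - u) ** 2 + (pv - v) ** 2
    return cost
```

Perturbing one observation by (3, 4) pixels raises the cost from 0 to 25; a BA solver drives this quantity back down by jointly adjusting poses and points.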

3D Reconstruction

Neural Rendering

CUDA

Jan 2026 – Mar 2026

3D Gaussian Splatting from Scratch

From-scratch implementation of 3DGS on a Buddha statue dataset, built on a COLMAP-based SfM reconstruction. Gaussian parameters are optimized via differentiable rasterization with EWA splatting; densification follows a clone/split/prune strategy. Achieved 31.42 dB PSNR and SSIM 0.9531 at 7,500 iterations; on this small dataset, stopping early outperformed the 30k-iteration checkpoint by over 16 dB, pointing to Gaussian overfitting. Containerized with Docker, with CI/CD via GitHub Actions.

31.42 dB PSNR · SSIM 0.9531 at 7,500 iterations

16 dB improvement over the 30k-iteration checkpoint on a 26-image dataset
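At render time, each pixel's color is an alpha blend of the depth-sorted Gaussians that cover it: C = sum_i c_i * alpha_i * prod_{j<i} (1 - alpha_j), where alpha_i is the Gaussian's opacity times its 2D falloff at the pixel. A minimal per-pixel sketch, assuming each Gaussian has already been projected to a 2D mean and covariance (the EWA step) and sorted near-to-far. The dictionary layout, the 1/255 skip threshold, and the 0.99 alpha clamp are illustrative choices, not taken from the implementation above, and the backward pass is omitted.

```python
import math


def gaussian_alpha(pixel, mean2d, cov2d, opacity):
    """alpha = opacity * exp(-0.5 * d^T Sigma^-1 d) for a projected 2D Gaussian.

    cov2d = (a, b, c) encodes Sigma = [[a, b], [b, c]].
    """
    dx, dy = pixel[0] - mean2d[0], pixel[1] - mean2d[1]
    a, b, c = cov2d
    det = a * c - b * b
    # Sigma^-1 = [[c, -b], [-b, a]] / det
    power = -0.5 * (c * dx * dx - 2 * b * dx * dy + a * dy * dy) / det
    return min(0.99, opacity * math.exp(power))  # clamp keeps transmittance positive


def composite(pixel, gaussians):
    """Front-to-back alpha compositing over Gaussians already sorted near-to-far."""
    color = [0.0, 0.0, 0.0]
    transmittance = 1.0
    for g in gaussians:
        alpha = gaussian_alpha(pixel, g["mean2d"], g["cov2d"], g["opacity"])
        if alpha < 1.0 / 255.0:
            continue  # negligible contribution at this pixel
        for k in range(3):
            color[k] += g["color"][k] * alpha * transmittance
        transmittance *= 1.0 - alpha
        if transmittance < 1e-4:
            break  # pixel is effectively opaque; farther Gaussians are hidden
    return color, transmittance
```

Two half-opaque Gaussians centered on the pixel, red in front of green, yield color `[0.5, 0.25, 0.0]` with 0.25 transmittance left, showing how nearer Gaussians occlude farther ones.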

SLAM

Factor Graphs

Sep 2025 – Oct 2025

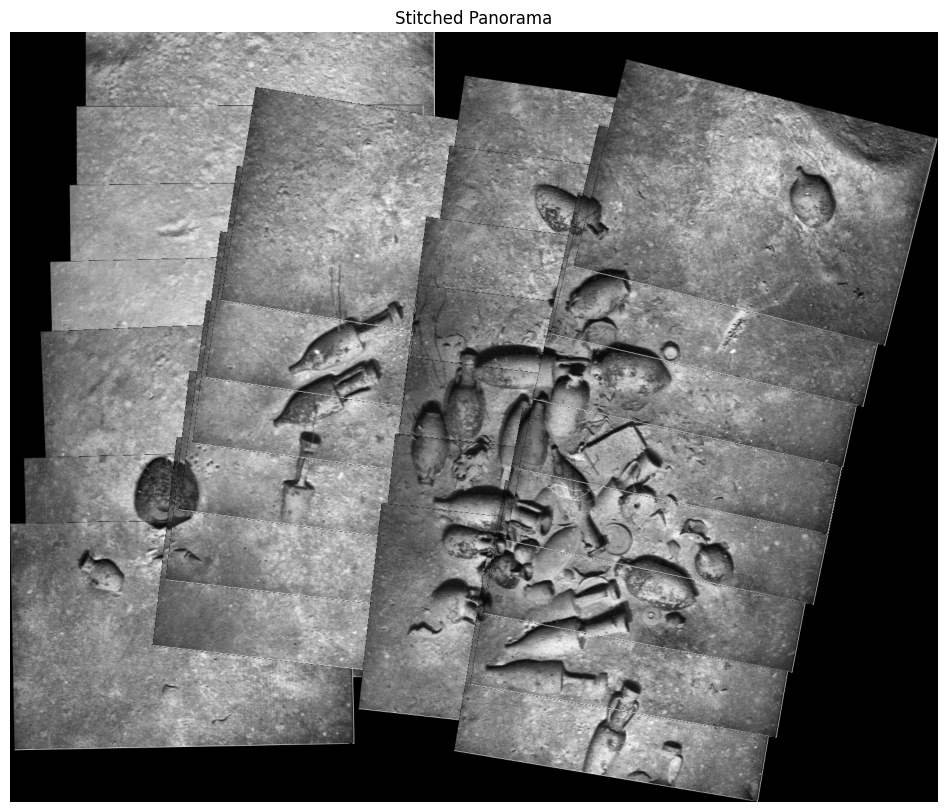

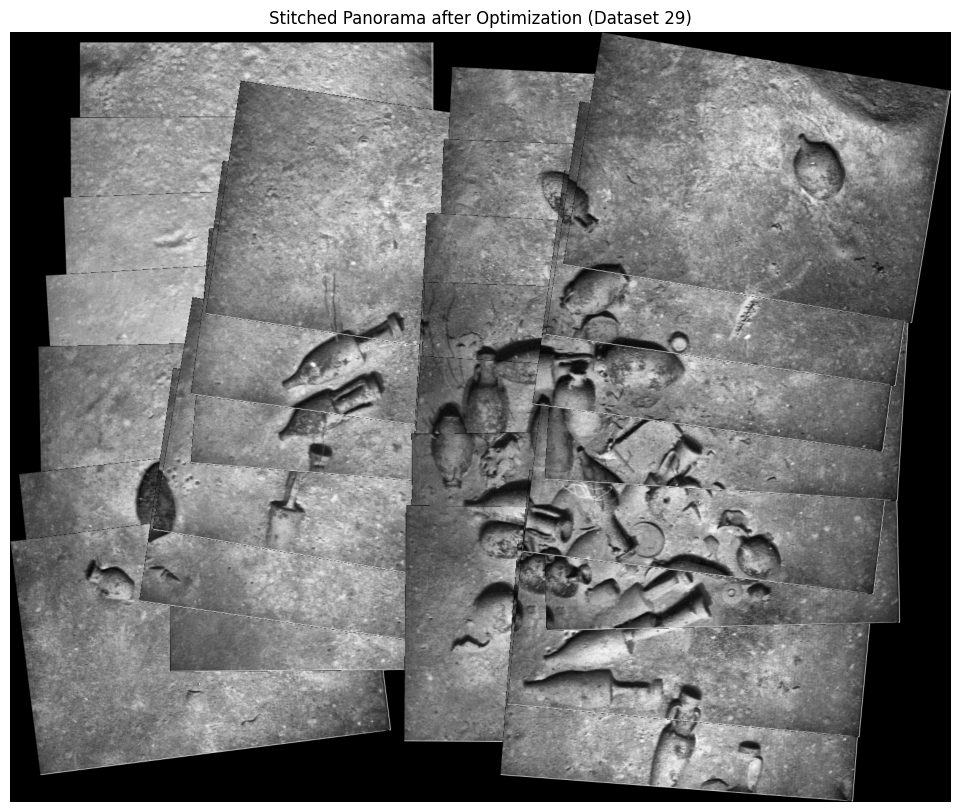

Underwater Image Mosaicing with Factor Graphs

Seamless 2D mosaic built with GTSAM factor-graph optimization over 28 underwater images. Loop-closure constraints on SIFT-matched overlapping pairs eliminate accumulated drift; robust homography estimation handles low-light, feature-sparse conditions.

28 underwater images · zero drift
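How a loop closure removes accumulated drift can be shown with a toy 1D pose graph solved as weighted linear least squares. This is a sketch under simplifying assumptions, not GTSAM: poses are scalar translations rather than homographies, `x_0` is anchored at 0 in place of a prior factor, and `optimize_pose_graph` and `solve_linear` are hypothetical helpers.

```python
def solve_linear(M, v):
    """Gaussian elimination with partial pivoting for a small dense system M x = v."""
    n = len(v)
    A = [row[:] + [v[i]] for i, row in enumerate(M)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(A[r][col]))
        A[col], A[piv] = A[piv], A[col]
        for r in range(col + 1, n):
            f = A[r][col] / A[col][col]
            for c in range(col, n + 1):
                A[r][c] -= f * A[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (A[r][n] - sum(A[r][c] * x[c] for c in range(r + 1, n))) / A[r][r]
    return x


def optimize_pose_graph(n_poses, factors):
    """Weighted least squares over 1D poses x_1..x_{n-1}, with x_0 anchored at 0.

    Each factor (i, j, measured, weight) encodes x_j - x_i ~ measured.
    Builds the normal equations (J^T W J) x = J^T W b and solves them.
    """
    n = n_poses - 1  # unknowns: x_1 .. x_{n_poses-1}
    H = [[0.0] * n for _ in range(n)]
    g = [0.0] * n
    for i, j, meas, w in factors:
        jac = {}  # Jacobian row: -1 at x_i, +1 at x_j (fixed x_0 has no column)
        if i > 0:
            jac[i - 1] = -1.0
        if j > 0:
            jac[j - 1] = 1.0
        for a, ja in jac.items():
            g[a] += w * ja * meas
            for b, jb in jac.items():
                H[a][b] += w * ja * jb
    return [0.0] + solve_linear(H, g)


odometry = [(0, 1, 1.1, 1.0), (1, 2, 1.1, 1.0), (2, 3, 1.1, 1.0)]  # each step over-reports
loop = (0, 3, 3.0, 100.0)  # strongly weighted loop closure back to the first image
```

Without the loop factor the last pose ends at 3.3; with it, the 0.3 error is spread evenly across the three steps and the last pose lands near 3.0.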

Computer Vision

YOLOv8

Oct 2025 – Dec 2025

Semantic Color Constancy via Object-Specific Priors

Object-aware chromaticity estimation that improves color accuracy under varying illumination. Fine-tuned YOLOv8 on 5,600 COCO-derived images; produces corrected outputs across 80 object categories with 130 ms inference on CPU.

63% color accuracy improvement

130 ms CPU inference · 80 categories
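One way object detections can drive illuminant estimation is a diagonal (von Kries) model: if a detected object's typical color under neutral light is known, the ratio of its observed color to that prior votes for the illuminant. A minimal sketch, assuming detections arrive as `(class_name, mean_rgb_in_box, confidence)` from a detector such as the fine-tuned YOLOv8 model; the `PRIORS` values are invented for illustration, and these functions are hypothetical rather than the project's code.

```python
import math

# Hypothetical reflectance priors: typical RGB of each class under neutral light.
# These numbers are invented for illustration, not learned from data.
PRIORS = {
    "banana": (0.80, 0.70, 0.20),
    "stop sign": (0.70, 0.10, 0.10),
}


def estimate_illuminant(detections):
    """detections: list of (class_name, mean_rgb_in_box, confidence).

    Under a von Kries model, observed_c = e_c * reflectance_c, so each object with
    a known prior votes for e_c proportional to observed_c / prior_c. Votes are
    normalized to unit sum and weighted by detector confidence.
    """
    acc = [0.0, 0.0, 0.0]
    for cls, obs, conf in detections:
        prior = PRIORS.get(cls)
        if prior is None:
            continue  # no prior for this class, so it cannot vote
        vote = [o / p for o, p in zip(obs, prior)]
        s = sum(vote)
        for ch in range(3):
            acc[ch] += conf * vote[ch] / s
    total = sum(acc)
    if total == 0:
        return [1.0 / 3] * 3  # no usable objects: fall back to a neutral illuminant
    return [a / total for a in acc]


def correct_pixel(rgb, illum):
    """Divide out the illuminant, scaling each channel by e_g / e_c (green as reference)."""
    return [v * illum[1] / e for v, e in zip(rgb, illum)]


def angular_error_deg(a, b):
    """Angle between two RGB vectors in degrees, a standard color-constancy error metric."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(x * x for x in b))
    return math.degrees(math.acos(max(-1.0, min(1.0, dot / (na * nb)))))
```

Weighting votes by confidence lets uncertain detections contribute less; classes without a prior are simply ignored.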

Computer Vision

U-Net

Sep 2025 – Oct 2025

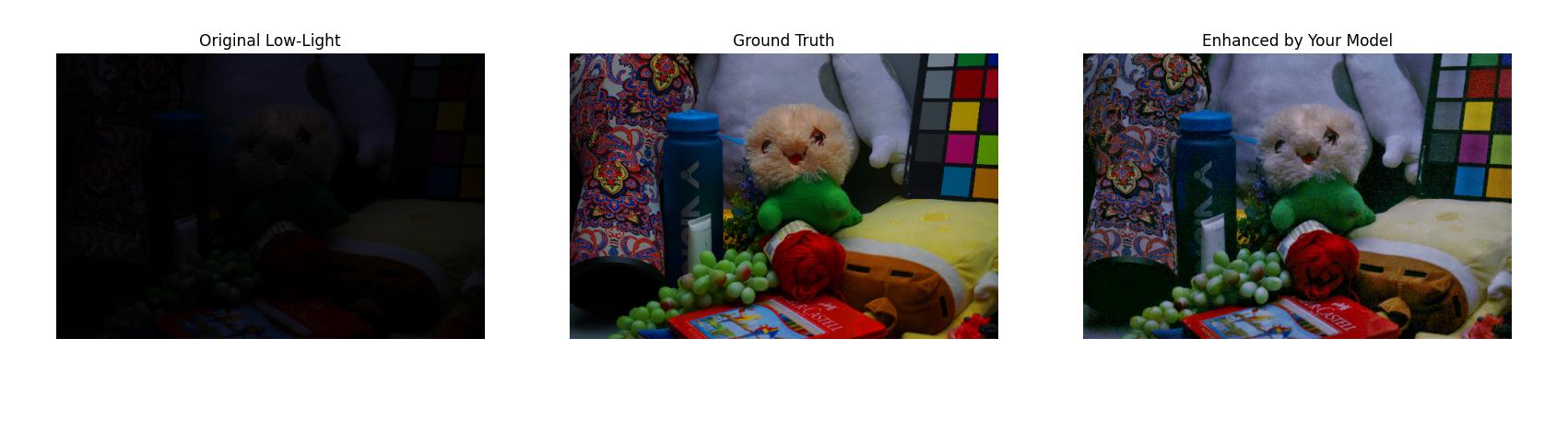

Low-Light Image Enhancement with U-Net

Hybrid U-Net architecture for joint denoising and exposure correction on the LOLv1 benchmark. A combined perceptual + L1 loss eliminates color-cast artifacts and preserves structural detail.

19.37 dB PSNR (+17% over baseline)
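PSNR follows the standard definition, PSNR = 10 * log10(MAX^2 / MSE), so each halving of the MSE adds about 3 dB. A minimal sketch, assuming images are stored as flat lists of values in [0, 1] (a layout chosen here for brevity); `psnr` is a hypothetical helper, not the evaluation code used for the benchmark.

```python
import math


def psnr(pred, target, max_val=1.0):
    """Peak signal-to-noise ratio in dB between two equally sized images."""
    if len(pred) != len(target) or not pred:
        raise ValueError("images must be non-empty and the same size")
    mse = sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_val ** 2 / mse)
```

An image that is off by 0.1 everywhere has MSE 0.01 and therefore a PSNR of 20 dB.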

Edge Deployment

YOLOv5

Jan 2024 – Apr 2024

Real-Time Wall Surface Defect Detection

YOLOv5 deployed on an NVIDIA Jetson Nano for real-time crack, bubble, and scratch detection. Trained on a custom 1,300-image dataset; Intel RealSense depth sensing provides 3D defect localization to distinguish surface from structural defects.

91.9% precision on Jetson Nano
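3D localization amounts to back-projecting a detection's pixel through the depth map. A minimal sketch, assuming an undistorted pinhole model with intrinsics fx, fy, cx, cy and depth in meters (librealsense provides `rs2_deproject_pixel_to_point` for this). The surface-versus-structural rule, which flags a defect whose median depth sits more than a threshold behind the surrounding wall, is a hypothetical heuristic with an arbitrary 5 mm threshold, not the project's actual criterion.

```python
def deproject(u, v, depth_m, fx, fy, cx, cy):
    """Back-project pixel (u, v) with depth (meters) to a camera-frame 3D point.

    Assumes an undistorted pinhole camera.
    """
    return ((u - cx) / fx * depth_m, (v - cy) / fy * depth_m, depth_m)


def median(values):
    s = sorted(values)
    if not s:
        raise ValueError("no valid depth samples")
    n = len(s)
    return s[n // 2] if n % 2 else 0.5 * (s[n // 2 - 1] + s[n // 2])


def classify_defect(box_depths_m, ring_depths_m, threshold_m=0.005):
    """Hypothetical heuristic: compare the defect's median depth with a ring of wall pixels.

    A zero depth means 'no reading' on RealSense depth frames, so zeros are dropped.
    """
    inside = median([d for d in box_depths_m if d > 0])
    wall = median([d for d in ring_depths_m if d > 0])
    return "structural" if inside - wall > threshold_m else "surface"
```

Using medians rather than means keeps a few noisy or missing depth readings inside the bounding box from flipping the decision.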